Neva Lukan Medvešek

INTRODUCTION

The following text does not offer a comprehensive view of consciousness, and thereby of intelligence; rather, it explores one aspect of consciousness that AI is missing and why it has not been, or cannot be, achieved. The necessity of consciousness is predicated on the conclusion of the Chinese room argument: that intelligence, which requires semantics, currently cannot be exhibited by an algorithm, regardless of how intelligently it may behave.

This article is also available in Slovenian.

It represents the field of philosophy.

DISCUSSION

How does semantics relate to consciousness?

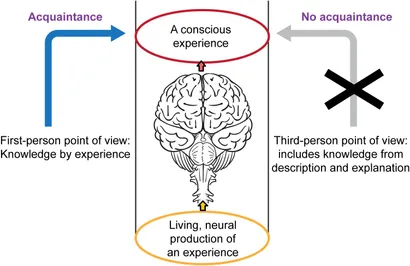

Semantics requires knowledge separate from syntax; the problem is that what we would call semantics in an AI is syntax masquerading as semantics. I would argue that definitions do not qualify as semantics, because they are yet another layer of syntax for the algorithm, which does not understand the word but rather uses the syntax of the definition (i.e. the values assigned to the words and examples that form the definition) to return an answer based on those values. I believe a strong case can be made that what lets us understand a word, and what AI lacks, is phenomenal consciousness. Phenomenal consciousness is illustrated by Frank Jackson’s (1982) “Knowledge Argument”.

Mary is confined to a black-and-white room, is educated through black-and-white books, and through lectures relayed on black-and-white television. In this way she learns everything there is to know about the physical nature of the world. She knows all the physical facts about us and our environment, in a wide sense of ‘physical’ which includes everything in completed physics, chemistry and neurophysiology, and all there is to know about the causal and relational facts consequent upon all this, including of course functional roles.

Mary is released and sees a narcissus flower in good lighting for the first time, and comes to know what it is like to see yellow, something she allegedly did not know before, despite her omniscience with respect to physical facts. (Jackson 1986, p. 567)

Jackson runs his argument thus:

(1) Mary (before her release) knows everything physical there is to know about other people.

(2) Mary (before her release) does not know everything there is to know about other people (because she learns something about them on her release); therefore

(3) There are truths about other people (and herself) which escape the physical story.

(1986, p. 568)

The dilemma of experience therefore concerns the ontological status of the qualitative character, or feel, of experiences, i.e. qualia: on this view they result from physical properties but are themselves non-physical and causally inconsequential.

Conceptualization within the field

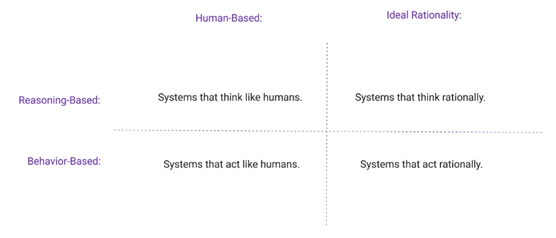

AI philosophers and practitioners generally do not concern themselves with phenomenal consciousness but rather with cognitive consciousness (propositional states) and with rationality achieved through machine learning.

“For instance, McCarthy (1999) argues that making robots conscious is in principle within our grasp. However, it turns out that what McCarthy has in mind is robots’ capacity to have conscious thoughts (propositional attitudes), not experiences. He seems to join the group of people who declare conscious experience a mystery, and as a result, he questions not only the possibility of robots’ having conscious experiences, but also the desirability of producing robots with this capacity, assuming it were possible to do so. He seems to think that having conscious experiences is an option that robots with fully conscious thoughts could do without.”

(Aydede & Guzeldere, 2010, p. 269)

CONCLUSION

Across the many conceptions of consciousness, AI excels in some and is incapable of achieving others. The common conception of conscious AI is based on its apparent self-consciousness, its performance on tests, and its ever-increasing presence in daily life. Yet despite its human-like behaviour and the claims of practitioners, it continues to lack true semantics: the subjective aspect of experience, the what-it-is-like sensations. Because these are not rooted in physical properties, they are as of now impossible to implement, and so AI is not intelligent, nor currently eligible to be.

Citations

HANSEN, Mette Kristine. 2019. Internet Encyclopedia of Philosophy [online]. [no date], [no number], [cited March 25 2024, 20:37]. https://iep.utm.edu/cognitive-phenomenology/

VAN GULICK, Robert. 2022 (Winter edition). The Stanford Encyclopedia of Philosophy [online]. [October 7 2022], [no number], [cited March 25 2024, 20:44]. https://plato.stanford.edu/archives/win2022/entries/consciousness/

BRINGSJORD, Selmer and GOVINDARAJULU, Naveen Sundar. 2022 (Autumn edition). The Stanford Encyclopedia of Philosophy [online]. [July 7 2022], [no number], [cited March 25 2024, 20:49]. https://plato.stanford.edu/archives/fall2022/entries/artificial-intelligence/

AYDEDE, Murat and GUZELDERE, Guven. 2010. Journal of Experimental & Theoretical Artificial Intelligence [online]. [November 9 2010], no. 3, [cited March 25 2024, 21:02]. https://www.tandfonline.com/doi/abs/10.1080/09528130050111437

Quillbot, Grammar checker [cited April 24 2024]. https://quillbot.com/grammar-check

Image sources

Figure 1: CrisNYCa. 17 April 2018, Wikimedia, Le Penseur (The Thinker) in the garden of Musée Rodin, Paris. [cited 26.03.2024, 18:48], available at https://upload.wikimedia.org/wikipedia/commons/a/a5/Mus%C3%A9e_Rodin_1.jpg

Figure 2: Todd E Feinberg. 2018, [cited 26.03.2024, 19:38], https://www.frontiersin.org/files/Articles/537022/fpsyg-11-01041-HTML/image_m/fpsyg-11-01041-g006.jpg

Figure 3: Russell and Norvig (1995, 2002, 2009), [cited 26.03.2024, 19:35], https://www.pi.exchange/hs-fs/hubfs/VISUALS%20-%20New%20frame%20(2).jpg?width=1320&name=VISUALS%20-%20New%20frame%20(2).jpg